Seeing depth in the brain

Professor Andrew J. Parker, Professor of Neuroscience, Fellow and Principal Bursar of St John's, studied Natural Sciences at the University of Cambridge before moving to Oxford with a Beit Memorial Fellowship in 1985. His research explores many aspects of spatial vision and the neuronal mechanisms of perceptual decisions, but especially concentrates on the neurophysiology and neuro-imaging of stereoscopic vision. He has held a Leverhulme Senior Research Fellowship and a Wolfson Merit Award from the Royal Society and recently held a Presidential International Fellowship from the Chinese Academy of Sciences.

Professor Parker is currently delivering the Physiological Society’s G L Brown Prize Lectures, a series specifically set up to promote interest in the experimental aspects of physiology. With lectures at the universities of London, York and Cardiff completed, three are to follow: Sheffield (26 October), Edinburgh (15 November) and Oxford (23 November).

Professor Parker writes:

‘Stereo vision is one of the glories of nature and a paradigm of how other parts of the mind might work’, wrote Steven Pinker in his book How the Mind Works. I can’t claim to have written this inspiring sentence myself, but I can at least claim to have chosen to work on stereo vision well before Pinker wrote it. Stereo vision is the use of the two eyes in co-ordination to give us a sense of depth: a pattern of 3D relief or 3D form that emerges out of the 2D images arriving at the left and right eyes. These images are captured by the light-sensitive surface of the eye, called the retina.
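Because the two eyes are a few centimetres apart, an object nearer or farther than the point being looked at projects to slightly different positions on the two retinas; this difference is the binocular disparity. Under the standard small-angle approximation it can be summarised as below. The symbols (I for the separation between the eyes, D for viewing distance, Δd for the depth difference, η for the relative disparity) and the worked values are illustrative assumptions, not figures from the lectures.

```latex
% Relative binocular disparity for two points separated in depth by \Delta d,
% viewed at distance D with interocular separation I (small-angle approximation)
\eta \approx \frac{I\,\Delta d}{D^{2}}, \qquad \Delta d \ll D

% Worked example with assumed typical values: I = 6.5\,\text{cm},\ D = 100\,\text{cm},\ \Delta d = 1\,\text{cm}
\eta \approx \frac{6.5 \times 1}{100^{2}} = 6.5 \times 10^{-4}\ \text{rad} \approx 2.2\ \text{arcmin}
```

The inverse-square dependence on D is why stereo depth is most informative for nearby objects.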

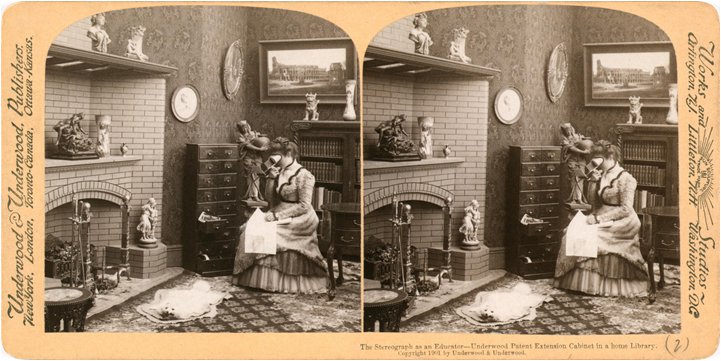

Figure 1: 'The stereograph as an educator', illustrating the virtual reality technology of the Victorian era.

The G L Brown Prize Lectures of the Physiological Society were set up specifically to promote interest in the experimental aspects of physiology. On the last Friday of September, I set off to the University of York to give the second in this series of lectures, which will take me around the UK (London has already gone; Cardiff, Sheffield, Edinburgh and Oxford are still to come). With predecessors such as Colin Blakemore and Semir Zeki, it is a tall order: they are not only at the very top scientifically but also superb communicators. It’s also a nice touch that Sir Lindor Brown’s career took him around the country, to Cambridge, Manchester, Mill Hill and central London, before he became Waynflete Professor in my own department in Oxford. The other pleasurable coincidence of giving these lectures this year is that it is 50 years since fundamental discoveries were made about how the brain combines the information from the two eyes to provide us with a sense of depth.

What makes people like Pinker, who are outside the field, so excited about stereo? Partly it’s just the experience itself. If you’ve been to a 3D movie or put on a virtual reality headset, almost all of you will have experienced stereoscopic depth. It is vivid and immediate. A few in the audience will be disappointed, because there are specific clinical conditions in which stereoscopic depth is lost; most, however, are captivated. Clinical loss typically occurs when there has been a problem with eye co-ordination in early childhood. In such cases the eyes are not correctly aligned, and the brain develops through a critical period with uncoordinated input from the two eyes, leading to a loss of stereoscopic depth perception. The other thing that excites Pinker is the way in which the brain creates a sense of a third dimension in space out of what are fundamentally two flat images. As a scientific problem, this is fascinating.

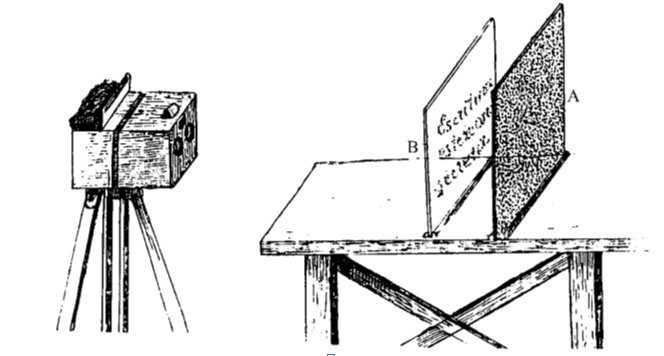

Figure 2: Ramon y Cajal's secret stereo writing, see Text Box

- Secret Stereo Writing.

The great Spanish neuroanatomist Ramon y Cajal developed this technique for sending a message photographically in code. The method uses a stereo camera with two lenses and two photographic plates, mounted on a tripod at the left of the figure. Each lens focuses a slightly different image of the scene in front of the camera. The secret message is on plate B, whereas plate A contains a scrambled pattern of visual contours, which we term visual noise. The message is unreadable in either photograph taken on its own because of the interfering visual noise. Each photograph would be sent by a different courier. When both have arrived, the recipient views the pair with a stereograph device like the one in Figure 1, and the message is revealed because it stands out in stereo depth, distinct from the noisy background. Cajal did not take this seriously enough to write a proper publication on his idea: “my little game…is a puerile invention unworthy of publishing”. He could not have guessed that this technique would form the basis of a major research tool in modern visual neuroscience.
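Cajal's "little game" anticipates the random-dot stereogram used in modern experiments: each eye's image is pure noise, but a region is displaced horizontally between the two images, so it can only be seen by combining them in depth. The Python sketch below builds such a pair with NumPy. It is the modern random-dot construction, not Cajal's own photographic method, and the function name and the image size, square size and shift are hypothetical illustrative choices.

```python
import numpy as np

def random_dot_stereogram(size=100, square=40, disparity=4, seed=0):
    """Return (left, right) binary images forming a random-dot stereogram.

    Hypothetical parameters: size is the image width/height in pixels, square
    is the side of the hidden central square, disparity is its horizontal
    shift between the two eyes' images, seed fixes the random dots.
    """
    top = (size - square) // 2  # the square is centred in the image
    if not (0 < disparity <= min(top, square)):
        raise ValueError("disparity must be positive and fit inside the margin")
    rng = np.random.default_rng(seed)
    left = rng.integers(0, 2, size=(size, size))  # uniform random black/white dots
    right = left.copy()
    rows = slice(top, top + square)
    # Copy the central square into the right image, shifted left by `disparity` pixels
    right[rows, top - disparity:top + square - disparity] = left[rows, top:top + square]
    # Fill the strip uncovered by the shift with fresh random dots
    right[rows, top + square - disparity:top + square] = rng.integers(
        0, 2, size=(square, disparity))
    return left, right

left, right = random_dot_stereogram()
```

Displaying `left` and `right` side by side in a stereoscope (or free-fusing them) should reveal the central square at a different depth from the background, even though neither array on its own contains any visible square.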

Our lab is investigating the fundamental basis of stereoscopic vision by recording from nerve cells in the visual cortex. One of the significant technical developments we use is the ability to record from many nerve cells simultaneously. Using this technique, I am excited to be starting a new phase of work that aims to identify exactly how the visual features imaged in the left eye are matched with similar features present in the right eye. The neural pathways of the brain first bring this information together in the primary visual cortex. Remarkably, there are some 30 additional cortical areas beyond the primary cortex, all in some way concerned with vision and most of them containing a topographic map of the 2D images arriving on the retinas of the eyes. The discovery of these visual areas started with Semir Zeki’s work in the macaque monkey visual cortex, and our work follows that line by recording electrical signals from the visual cortex of these animals. To achieve this, we are using brain implants in the macaques, very similar to those being trialled for human neurosurgical use.
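To make the matching problem concrete: a textbook machine-vision approach slides one eye's image over the other and keeps the shift that gives the best match. The Python sketch below does this by correlation for a single row of a synthetic textured image. It only illustrates what "matching" has to achieve and is not a model of how cortical neurons do it; the function name, signal length, noise level and search range are hypothetical choices.

```python
import numpy as np

def estimate_disparity(left_row, right_row, max_shift=10):
    """Return the horizontal shift (in samples) that best aligns right_row with left_row.

    Tries every candidate shift in [-max_shift, max_shift] and keeps the one
    with the highest Pearson correlation. np.roll wraps around at the edges,
    which is acceptable for this toy signal but not for real images.
    """
    shifts = np.arange(-max_shift, max_shift + 1)
    scores = [np.corrcoef(left_row, np.roll(right_row, -s))[0, 1] for s in shifts]
    return int(shifts[np.argmax(scores)])

rng = np.random.default_rng(1)
left_row = rng.standard_normal(200)  # one row of a textured left-eye image
# Right eye: the same texture displaced by 3 samples, plus independent noise
right_row = np.roll(left_row, 3) + 0.5 * rng.standard_normal(200)
print(estimate_disparity(left_row, right_row))  # 3
```

The added noise means the two rows are similar rather than identical, which is closer to the real problem of matching features between the eyes.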

Figure 3: a very odd form of binocular vision in the animal kingdom. The young turbot grows up with one eye on each side of the head like any other fish, but as adulthood is reached one eye migrates anatomically to join the other on the same side of the head. It is doubtful whether the adult turbot also acquires stereo vision.

My lab is currently interested in how information passes from one visual cortical area to another. Nerve cells in the brain communicate with a temporal code, which uses the rate and timing of impulse-like events (action potentials, or spikes) to signal the presence of different objects in our sensory world. When information passes from one area to another, the signals about depth get rearranged: they successively lose features that are unrelated to our perceptual experience and acquire new properties that correspond more closely to it and are not observed in earlier visual areas. In this way, these transformations eventually come to shape our perceptual experience.

In this phase of work, we are identifying previously unobserved properties of this transformation from one cortical area to another. We are examining how populations of nerve cells use co-ordinated signals to allow us to discriminate objects at different depths. We are testing the hypothesis that the variability of neural signals is a fundamental limit on how well the population of nerve cells can transmit reliable information about depth. To be specific, we are currently following a line of enquiry inspired by theoretical analysis that identifies the shared variability between pairs of neurons (that is, the covariance of neural signals) as a critical limit on sensory discrimination. Pursuing this line is giving us new insights into why the brain has so many different visual areas and how these areas work together.
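One common way theorists formalise how shared variability limits discrimination is linear Fisher information, I = f'ᵀ Σ⁻¹ f', where f' holds each neuron's tuning-curve slope with respect to depth and Σ is the covariance of the neurons' noise; discrimination thresholds scale roughly as 1/√I. The Python sketch below is a toy under stated assumptions, not the lab's analysis: the function names, the uniform slopes and the covariance model (independent unit-variance noise plus a shared "information-limiting" component ε f' f'ᵀ) are all hypothetical.

```python
import numpy as np

def linear_fisher_information(fprime, cov):
    """Linear Fisher information I = f'^T Sigma^{-1} f' for a population of neurons."""
    return float(fprime @ np.linalg.solve(cov, fprime))

def population_information(n, eps):
    """Information carried by n hypothetical neurons with shared-noise strength eps."""
    fprime = np.ones(n)  # assumed: every neuron has tuning slope 1 with respect to depth
    cov0 = np.eye(n)     # assumed: independent noise with unit variance
    # Shared variability aligned with the tuning slopes: it mimics a real change in depth,
    # so averaging across neurons cannot remove it
    cov = cov0 + eps * np.outer(fprime, fprime)
    return linear_fisher_information(fprime, cov)

for n in (10, 100, 1000):
    print(n, population_information(n, 0.0), population_information(n, 0.01))
```

With ε = 0 the information grows in proportion to the number of neurons. With ε = 0.01 it follows N/(1 + εN), giving roughly 9.1, 50 and 90.9 for 10, 100 and 1000 neurons, and it never exceeds 1/ε = 100 however many neurons are recorded: the covariance, not the population size, sets the limit.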

It is an exciting time. Newer technologies create many opportunities: parallel recording of neural signals from many cells at once, and the ability to intervene in neural signalling using focal electrical stimulation and optogenetics. The prospect of applying these methods to core problems in the neuroscience of our perceptual experience is something to look forward to in the coming years.